Why most AI rollouts stall before they start

Mar 23, 2026

Denise Bosinceanu

You can't manage what you can't measure, and most teams can't measure AI adoption at all.

Leadership mandated it. You bought it. Now what?

The AI initiative came from the top. The business case was approved, the licenses were purchased, the kickoff meeting happened, and everyone nodded along with genuine enthusiasm. Then it got quiet. Not because people stopped caring, but because nobody could see whether anyone was actually using it.

That’s the gap most organizations don’t talk about openly: the space between buying AI and meaningfully using it. Nearly three-quarters of organizations (74%) say they cannot measure business value from their AI initiatives. That isn’t a technology problem or a budget problem. It’s a visibility problem, and it compounds fast when nobody can tell a rollout that’s gaining traction from one that’s quietly dying.

The opinion loop is real, and it’s killing your rollout

Here’s what managing an AI rollout actually looks like on the ground when you don’t have data to work with. You push the tool to the team. A few enthusiastic early adopters jump in right away, a few others say they’ll get to it when things slow down, and a long tail of people quietly never engage at all. Nobody flags it, because there’s no mechanism to surface it.

So when leadership asks how it’s going, you give your best read of the room. You share the positive signals you’ve heard from early adopters, but the rest of the picture is harder to see. Without clear usage data, silence can mean anything.

That ambiguity creates the opinion loop, where perception replaces measurement. It’s also how a rollout with only 31% of use cases in full production can still be interpreted as a win.

In a survey from Gravity Global, more than half of senior executives felt they were failing in their role when it came to supporting AI initiatives within their companies. The commitment was there and the investment was real, but the data wasn’t.

The right question isn’t “is it working?” — it’s “is anyone actually using it?”

The instinct when you’re accountable for an AI rollout is to jump straight to ROI: did AI video generate more pipeline, did it shorten deal cycles, can we prove it moved the number? Those are valid questions eventually, but chasing them too early either produces misleading answers or leads to analysis paralysis, because the signal isn’t clean until you’ve established a baseline of consistent usage to measure against.

The right starting point is simpler and more honest: is your team actually using the tool? For AI video specifically, that means being able to answer whether reps have created an avatar and generated videos, whether those videos are getting watched, and whether usage is consistent over time or people tried it once and dropped off. It also means understanding whether automation is actually being adopted or whether everything is still happening manually.

These aren’t vanity metrics. They’re the leading indicators that tell you whether your rollout has real momentum or whether you’re managing a slow fade without knowing it.

Once those behaviors become consistent, the outcomes tend to follow. When Vidyard embedded AI-powered video across everyday revenue workflows, webinar attendance rates rose from roughly 30–40% to nearly 50%, and prospecting workflows generated more than 100 additional Sales Accepted Leads per month, contributing over $100K in new monthly revenue. We break down the full numbers and examples in our guide to the ROI of video.

That is exactly why measurement matters so much early on. Eighty-one percent of teams say simpler measurement is a key driver of adoption, which tells you something important: people want to track progress and act on what they learn. They just need tools that make it possible without adding another layer of work to an already full plate.

Visibility doesn’t just help you report, it changes how you coach

When managers have clear, user-level data on AI adoption, the conversations they’re able to have change entirely. Instead of asking someone how they think the team is doing with AI video, the conversation becomes: “I can see that six reps haven’t generated a video yet. What’s getting in the way for them?” That’s a specific, actionable coaching moment that simply doesn’t exist without the data to prompt it. It’s also the difference between a manager who feels like they’re guessing and one who feels like they’re actually running something.

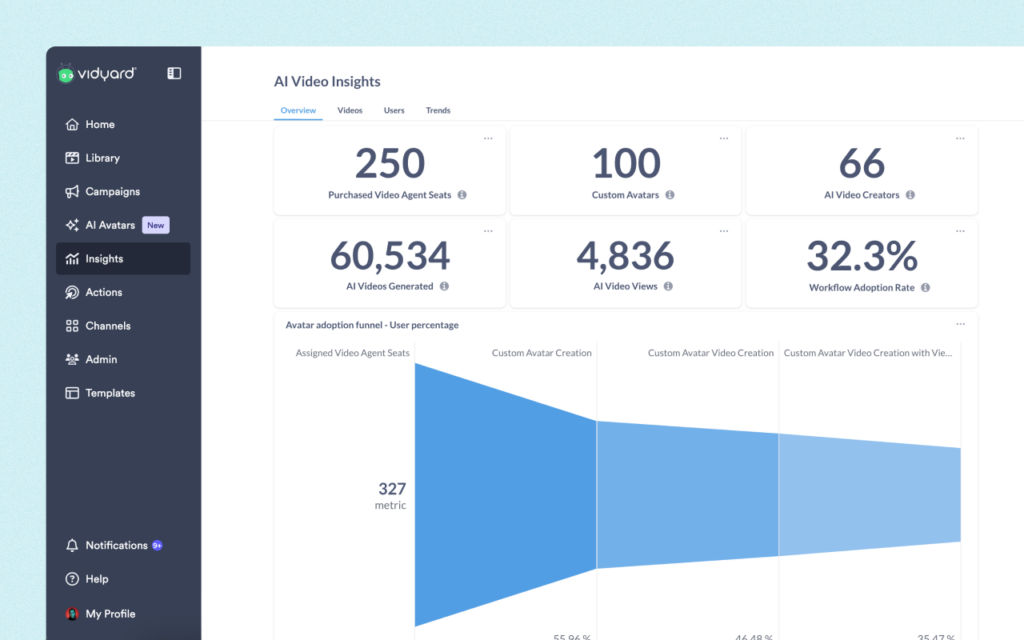

That’s what Vidyard’s AI Video Insights is built for. It gives the people responsible for rolling out AI video a clear, in-product view of who has adopted, who hasn’t started, how individual videos are performing, and whether usage is trending in the right direction over time, all filterable by team, avatar type, and generation method.

The Avatar Adoption Funnel shows you exactly where your rollout is stalling, moving from Purchased Seats through to Custom Avatar Video Viewed. If seats aren’t assigned, that’s a procurement problem. If avatars are created but videos aren’t getting watched, that’s a usage and delivery problem. Each drop-off maps to a specific action. The AI Video Distribution chart sits alongside the funnel and breaks videos down by how they were generated; in a healthy rollout, you want the workflow slice growing over time relative to manually generated videos.
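To make the drop-off logic concrete, here is a minimal Python sketch of how stage-to-stage conversion could be computed from funnel counts. It is not Vidyard’s implementation or API. Only “Purchased Seats” and “Custom Avatar Video Viewed” come from the funnel as described; the intermediate stage names and all counts are hypothetical, chosen purely for illustration.

```python
"""Hypothetical sketch: stage-to-stage conversion in an adoption funnel.

Only the first and last stage names come from the article; the
intermediate stage names and all counts are assumptions.
"""

from typing import List, Tuple

# Ordered (stage_name, count) pairs, from the top of the funnel down.
Funnel = List[Tuple[str, int]]


def stage_conversion(funnel: Funnel) -> List[Tuple[str, str, float]]:
    """Return (from_stage, to_stage, conversion_rate) for each adjacent pair."""
    rates = []
    for (prev_name, prev_count), (name, count) in zip(funnel, funnel[1:]):
        # Guard against division by zero when an earlier stage is empty.
        rate = count / prev_count if prev_count else 0.0
        rates.append((prev_name, name, rate))
    return rates


def biggest_drop_off(funnel: Funnel) -> Tuple[str, str, float]:
    """Return the adjacent stage pair with the lowest conversion rate."""
    return min(stage_conversion(funnel), key=lambda r: r[2])


if __name__ == "__main__":
    # Illustrative numbers only, not real customer data.
    example: Funnel = [
        ("Purchased Seats", 100),
        ("Assigned Seats", 90),     # hypothetical stage name
        ("Avatar Created", 60),     # hypothetical stage name
        ("Video Generated", 45),    # hypothetical stage name
        ("Custom Avatar Video Viewed", 15),
    ]
    for src, dst, rate in stage_conversion(example):
        print(f"{src} -> {dst}: {rate:.0%}")
    print("Biggest drop-off:", biggest_drop_off(example))
```

The stage pair with the lowest conversion is where to look first. In this made-up example, it falls between generating videos and getting them viewed, which the funnel description reads as a usage and delivery problem rather than a seat-assignment one.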

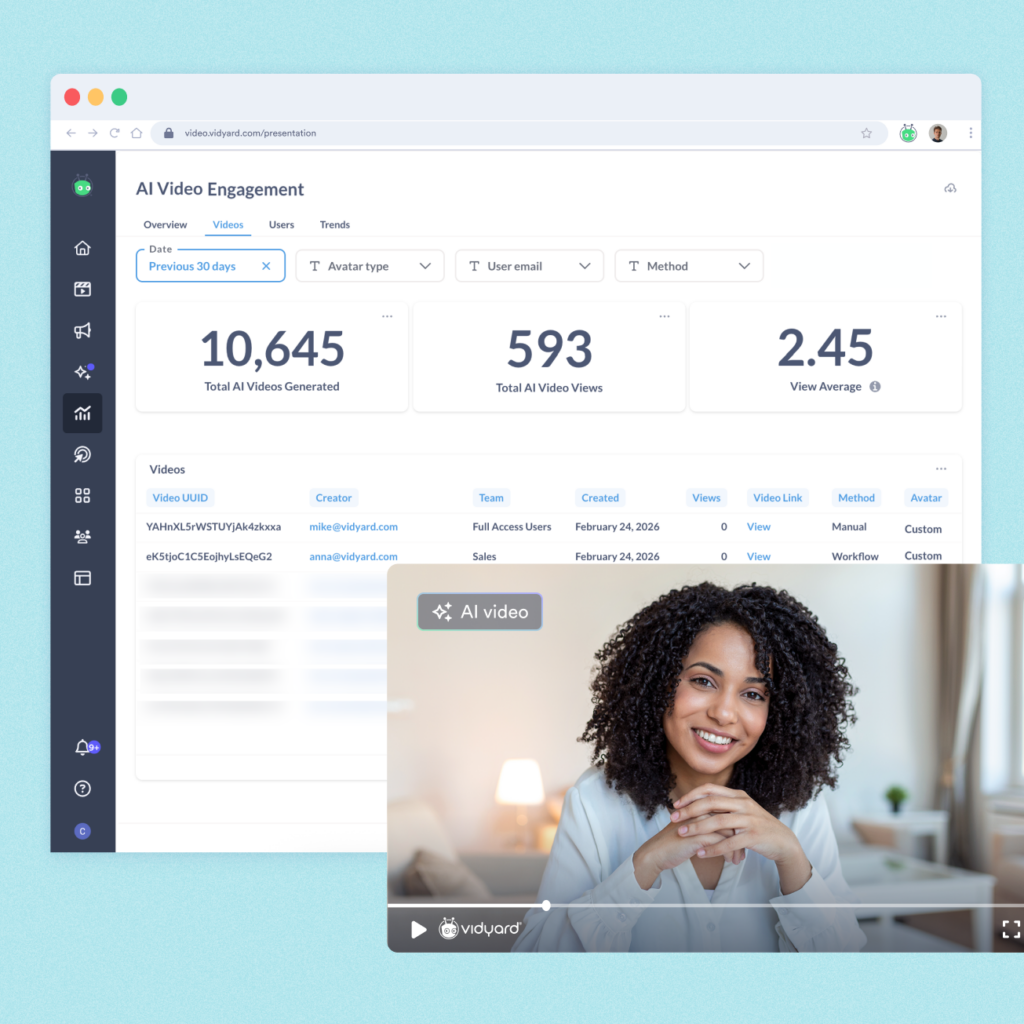

The Videos tab lets you dig into the actual videos themselves, filtering by generation method, avatar type, date range, or individual rep to understand what’s going out and what’s getting traction. A rep with hundreds of videos generated and zero views is a signal worth following up on immediately. The average views per video across the tab is the closest thing in this dashboard to a leading indicator, because a video that gets watched is an interaction that actually happened.
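As a rough illustration of that follow-up check, the sketch below flags reps who have generated many videos but received no views, and computes average views per video. The `VideoRecord` type, its field names, and the `min_videos` threshold of 10 are assumptions made for this example, not fields or settings exported by AI Video Insights.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class VideoRecord:
    """One generated video (hypothetical schema for illustration)."""
    rep: str
    generation_method: str  # assumed labels, e.g. "workflow" or "manual"
    views: int


def average_views(videos: List[VideoRecord]) -> float:
    """Mean views per video; 0.0 when there are no videos."""
    if not videos:
        return 0.0
    return sum(v.views for v in videos) / len(videos)


def reps_to_follow_up(videos: List[VideoRecord], min_videos: int = 10) -> List[str]:
    """Reps with at least `min_videos` videos and zero total views, sorted by name.

    `min_videos` is an arbitrary, assumed threshold so that a rep who
    generated a single unwatched video is not flagged.
    """
    counts: Dict[str, int] = defaultdict(int)
    views: Dict[str, int] = defaultdict(int)
    for v in videos:
        counts[v.rep] += 1
        views[v.rep] += v.views
    return sorted(
        rep for rep, n in counts.items() if n >= min_videos and views[rep] == 0
    )
```

Filtering the input list first (for example, keeping only one team or one generation method) mirrors the tab’s filters and lets the same two checks run on any slice.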

The Trends tab is where you take all of that and zoom out. Are more people creating videos week over week? Is the workflow line growing relative to manual? If video generation is steady but views are dropping, something is off in delivery or targeting. If both are climbing, the program is working.

A declining line in active creators is an early warning sign that momentum is slipping, and it’s much easier to address early than after a full quarter of inactivity. This is how you tell a story about your rollout over time rather than pointing to a single data point.
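The trend questions in this section can be expressed as a few simple rules. This hypothetical sketch fits a least-squares slope to each weekly series (videos generated, views, active creators), labels each series as up, down, or flat relative to its own mean, and then applies the diagnoses described in the text. The 5% per-week tolerance and all function names are assumptions, not the dashboard’s actual logic.

```python
from typing import List, Sequence


def slope(values: Sequence[float]) -> float:
    """Least-squares slope of values against week index 0..n-1."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def relative_trend(values: Sequence[float], tolerance: float = 0.05) -> str:
    """Classify a series as 'up', 'down', or 'flat' (slope relative to mean).

    `tolerance` is an assumed threshold: 0.05 means a change of 5% of the
    series mean per week.
    """
    if not values:
        return "flat"
    mean_y = sum(values) / len(values)
    if mean_y == 0:
        return "flat"
    rel = slope(values) / mean_y
    if rel > tolerance:
        return "up"
    if rel < -tolerance:
        return "down"
    return "flat"


def diagnose(
    videos_generated: Sequence[float],
    views: Sequence[float],
    active_creators: Sequence[float],
) -> List[str]:
    """Apply the article's trend rules to weekly series (hypothetical helper)."""
    gen = relative_trend(videos_generated)
    seen = relative_trend(views)
    creators = relative_trend(active_creators)
    notes = []
    if creators == "down":
        notes.append("Active creators declining: momentum may be slipping.")
    if gen == "flat" and seen == "down":
        notes.append("Generation steady but views dropping: check delivery or targeting.")
    if gen == "up" and seen == "up":
        notes.append("Generation and views both climbing: the program looks healthy.")
    return notes
```

A least-squares slope is used here rather than comparing the first and last weeks, so a single unusually busy or quiet week at either end has less power to flip the classification on its own.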

Not opinions, not gut feel, just a real and honest picture of where your rollout stands and exactly what needs attention next.

Want a closer look at AI Video Insights? Watch our on-demand quarterly product webinar, where we walk through each tab, what to look for, and how to use it to run a tighter rollout.

If you’re building out an AI video strategy across your revenue team and want clearer visibility into adoption, usage, and performance, see how AI Video Insights works in practice: book a demo.